Introduction

Kubernetes is the foundation of modern cloud infrastructure, offering scalability, automation, and rapid deployments. However, without cost optimization, Kubernetes can lead to excessive cloud spending. As businesses scale, uncontrolled resource allocation, inefficient scaling, and idle workloads can drastically increase cloud costs.

By 2025, Kubernetes cost optimization will be a necessity, not an option. Companies that fail to prioritize it risk budget overruns, wasted resources, and reduced operational efficiency. In this blog, we'll explore why Kubernetes cost optimization should be a top priority, common cost challenges, and practical strategies to optimize expenses while maintaining performance.

The Growing Challenge of Kubernetes Costs

Kubernetes is designed to efficiently manage containerized applications, but costs can spiral out of control due to:

- Overprovisioning resources: Assigning more CPU and memory than needed, leading to unnecessary expenses.

- Idle workloads: Unused or underutilized resources that continue to consume budget without delivering value.

- Lack of visibility: Difficulty in tracking spending across clusters and workloads, making it harder to optimize costs.

- Inefficient scaling policies: Poorly configured autoscaling can lead to underutilized nodes or sudden spikes in expenses.

- Unoptimized storage and networking: Persistent volumes, redundant backups, and excessive egress costs add up quickly.

- Orphaned or zombie resources: Leftover deployments, unused containers, and lingering storage that continue to accrue costs.

As organizations expand their Kubernetes usage, these issues compound, making cost management a crucial focus area in 2025.

Why Kubernetes Cost Optimization is Essential in 2025

1. Accelerate Innovation by Reducing Troubleshooting

When Kubernetes infrastructure isn't optimized, DevOps and engineering teams waste countless hours troubleshooting infrastructure issues. Optimizing resource allocation empowers developers and DevOps teams to spend less time firefighting and more time on strategic innovation. Efficiently managed infrastructure allows faster product iterations, boosts agility, and shifts focus from resolving infrastructure waste to building impactful new features.

2. Sustainability and Green Computing

In 2025, organizations are under increasing pressure to reduce their carbon footprint. Cloud providers are investing in sustainability initiatives, and companies must align with these efforts. Optimizing Kubernetes costs often means using fewer resources more efficiently, reducing energy consumption, and contributing to a greener planet.

3. Economic Uncertainty & Budget Constraints

With global economic uncertainties, businesses are looking to cut unnecessary expenses while maintaining operational efficiency. Unoptimized Kubernetes environments can quickly drain budgets, leading to financial strain. By implementing cost optimization strategies, companies can maximize cloud investments and reduce financial risks.

4. Competitive Advantage Through Cost-Efficient Scaling

Startups and enterprises alike need to scale their applications efficiently while keeping costs under control. Companies that master Kubernetes cost optimization will gain a competitive edge by strategically allocating budgets, improving agility, and reducing unnecessary cloud expenditure.

5. Cloud Costs Are Rising

With more businesses migrating to the cloud, demand for cloud services is increasing, leading to higher prices from major providers like AWS, Azure, and Google Cloud. Companies that don't optimize Kubernetes costs will feel the financial strain, especially as cloud providers introduce more complex pricing models.

Strategies to Optimize Kubernetes Costs in 2025

1. Right-Size Your Containers

Over-provisioning is one of the biggest Kubernetes cost drains - allocating more CPU or memory than a container actually needs. It's like renting a moving truck twice as big as required; you're paying for empty space. In Kubernetes, that unused space is idle CPU and memory reserved 'just in case.'

Why Rightsizing Matters

Adjusting resource requests and limits to match actual usage prevents waste while ensuring smooth operation. Many companies cut costs significantly by simply right-sizing workloads. For example, reducing a container's CPU request from 4 vCPUs to 2 vCPUs, when it only uses 1 vCPU, can halve the compute cost attributed to it without performance loss.
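To make that arithmetic concrete, here is a small Python sketch. The per-vCPU hourly price and the replica count are made-up placeholders rather than quoted cloud rates, and the function name is ours.

```python
HOURS_PER_MONTH = 730  # average number of hours in a month

def monthly_cpu_cost(requested_vcpus: float, replicas: int, price_per_vcpu_hour: float) -> float:
    """Cost of the CPU a workload reserves, regardless of how much it actually uses."""
    return requested_vcpus * replicas * price_per_vcpu_hour * HOURS_PER_MONTH

PRICE = 0.04  # hypothetical price per vCPU-hour

before = monthly_cpu_cost(4, replicas=10, price_per_vcpu_hour=PRICE)
after = monthly_cpu_cost(2, replicas=10, price_per_vcpu_hour=PRICE)
savings_pct = 100 * (before - after) / before

print(f"before=${before:.2f}/month after=${after:.2f}/month savings={savings_pct:.0f}%")
```

Keep in mind that the bill only drops once the freed capacity lets the cluster run fewer or smaller nodes; until then the saving exists only on paper.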

How to Right-Size Effectively

- Monitor Usage: Use Kubernetes metrics (Prometheus, etc.) to track actual CPU/memory consumption. Tools like Kubecost and Goldilocks can suggest optimized values.

- Automate with VPA: The Vertical Pod Autoscaler (VPA) adjusts requests dynamically based on real usage. Run it in recommendation-only mode first (updateMode "Off") so you can review its suggestions before it changes any pods.

- Enforce Policies: Companies like Adidas automated VPA enforcement, reducing CPU and memory use by 30% and cutting dev/staging cluster costs by up to 50%. IronScales achieved 21% cost savings with automated rightsizing.
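The monitoring step above boils down to turning observed usage into a request value. The sketch below shows one common approach, a high percentile plus headroom. It is an illustration only, not the algorithm used by VPA, Kubecost, or Goldilocks, and the function name and default values are assumptions.

```python
def recommend_request(samples_millicores: list[int], percentile: int = 95,
                      headroom_percent: int = 15) -> int:
    """Suggest a CPU request in millicores from observed usage samples."""
    if not samples_millicores:
        raise ValueError("need at least one usage sample")
    ordered = sorted(samples_millicores)
    # Nearest-rank percentile, computed with integer ceiling division.
    rank = max(1, (percentile * len(ordered) + 99) // 100)
    baseline = ordered[rank - 1]
    # Add headroom and round up to a whole millicore.
    return (baseline * (100 + headroom_percent) + 99) // 100

usage = list(range(100, 1100, 100))  # ten samples: 100m, 200m, ... 1000m
print(recommend_request(usage), "millicores")
```

A percentile ignores rare spikes on purpose, so latency-sensitive services may need a higher percentile or more headroom than batch jobs.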

2. Use Cost-Efficient Infrastructure

Infrastructure choices significantly impact Kubernetes costs. Two identical clusters can have vastly different costs based on instance selection, so optimizing the infrastructure layer is one of the most direct ways to save money.

Leverage Spot Instances:

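- Cloud providers offer spot capacity (AWS Spot Instances, Azure Spot VMs, Google Cloud Spot VMs, formerly Preemptible VMs) at discounts of up to 90% compared with on-demand pricing.

- Kubernetes can absorb spot node terminations: pods managed by a controller such as a Deployment are rescheduled automatically onto the remaining nodes.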

- Hybrid approach: Run 70-80% of nodes on spot and keep critical workloads on on-demand instances.

- Use taints/tolerations or node selectors to assign spot usage only to non-critical workloads (e.g., batch jobs).
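As a rough illustration of the last point, the Python sketch below adds a node selector and a toleration to a pod spec so that only workloads that opt in land on spot nodes. The label and taint key `example.com/capacity-type` is hypothetical; use whatever your node provisioning actually applies.

```python
import copy
import json

# Hypothetical label and taint that the cluster operator applies to spot nodes.
SPOT_KEY = "example.com/capacity-type"
SPOT_VALUE = "spot"

def allow_on_spot(pod_spec: dict) -> dict:
    """Return a copy of a pod spec that targets spot nodes and tolerates their taint."""
    spec = copy.deepcopy(pod_spec)
    spec.setdefault("nodeSelector", {})[SPOT_KEY] = SPOT_VALUE
    spec.setdefault("tolerations", []).append({
        "key": SPOT_KEY,
        "operator": "Equal",
        "value": SPOT_VALUE,
        "effect": "NoSchedule",
    })
    return spec

batch_job = {"containers": [{"name": "report", "image": "example/report:1.0"}]}
spot_job = allow_on_spot(batch_job)
print(json.dumps(spot_job, indent=2))
```

Tainting the spot nodes keeps critical pods off them by default, while the node selector keeps the batch job from consuming on-demand capacity.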

Reserved Instances / Savings Plans:

- If workloads are steady, commit to AWS Savings Plans or Reserved Instances for 30-50% cost savings over on-demand pricing.

- Calculate baseline cluster size (e.g., 10 always-needed nodes) and commit only to that, keeping autoscaling flexible.
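The reasoning behind committing only to the baseline can be sketched in a few lines of Python. The node counts and prices are invented for illustration (the committed price assumes a 40% discount, within the range mentioned above).

```python
def blended_hourly_cost(node_counts: list[int], committed_nodes: int,
                        on_demand_price: float, committed_price: float) -> float:
    """Average hourly cost when committed nodes are paid for every hour
    and anything above the commitment runs on-demand."""
    total = 0.0
    for nodes in node_counts:
        burst = max(0, nodes - committed_nodes)
        total += committed_nodes * committed_price + burst * on_demand_price
    return total / len(node_counts)

history = [10, 10, 12, 18, 25, 14, 10, 10]   # hypothetical node counts per period
ON_DEMAND, COMMITTED = 0.20, 0.12            # hypothetical hourly prices per node

no_commit = blended_hourly_cost(history, 0, ON_DEMAND, COMMITTED)
baseline = blended_hourly_cost(history, min(history), ON_DEMAND, COMMITTED)
peak = blended_hourly_cost(history, max(history), ON_DEMAND, COMMITTED)
print(f"no commitment={no_commit:.3f} baseline={baseline:.3f} peak={peak:.3f}")
```

With these numbers, committing to the always-needed nodes is cheapest, while committing to peak capacity costs more than making no commitment at all because the discount is paid on nodes that sit unused.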

Optimize Instance Sizes:

- Choose newer-generation instances for better price/performance.

- Use ARM-based instances (e.g., AWS Graviton2) for lower per-core costs.

- Match instance types to workload needs (memory-optimized vs. compute-optimized).

Serverless Kubernetes:

- AWS Fargate (on EKS) and GKE Autopilot eliminate node management: you pay for the vCPU and memory your pods request rather than for whole nodes.

- No idle node costs, ideal for spiky workloads or low utilization.

- Google claims GKE Autopilot saves up to 85% in Kubernetes TCO by handling rightsizing and bin-packing automatically.

- Caution: Serverless may cost more for consistently high usage due to premium pricing.
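The trade-off in the caution above comes down to a break-even utilization. The following Python sketch compares the two models under simplified assumptions: CPU-only pricing, made-up prices, and function names of our own.

```python
def break_even_utilization(node_price: float, serverless_price: float) -> float:
    """Node utilization above which self-managed nodes become cheaper.

    Both prices are per vCPU-hour: node_price for provisioned node capacity,
    serverless_price for capacity requested by pods.
    """
    return node_price / serverless_price

def cheaper_option(utilization: float, node_price: float, serverless_price: float) -> str:
    if not 0 < utilization <= 1:
        raise ValueError("utilization must be in (0, 1]")
    # On nodes you pay for idle capacity too, so each used vCPU-hour costs more.
    effective_node_price = node_price / utilization
    return "serverless" if serverless_price < effective_node_price else "nodes"

NODE_PRICE, SERVERLESS_PRICE = 0.03, 0.05  # hypothetical prices per vCPU-hour

print(break_even_utilization(NODE_PRICE, SERVERLESS_PRICE))
print(cheaper_option(0.30, NODE_PRICE, SERVERLESS_PRICE))
print(cheaper_option(0.80, NODE_PRICE, SERVERLESS_PRICE))
```

Under these assumed prices, clusters that keep their nodes less than about 60% busy would pay less on a serverless model, and busier clusters would pay more.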

Offload to Managed Services:

- The cheapest Kubernetes resource is the one you don't run.

- If your cluster handles logging, monitoring, or databases, check if a managed cloud service is more cost-effective.

3. Gain Visibility with Cost Monitoring Tools

You can't reduce what you can't see. Kubernetes abstracts infrastructure, making it hard to attribute costs to specific teams or apps. Cost monitoring tools solve this by providing real-time insights into where your cloud spend is going.

Use Dedicated Kubernetes Cost Tools:

- OpenCost (open-source): DIY cost allocation.

- Kubecost: Enterprise-ready, real-time cost breakdowns with savings recommendations.

- AWS Cost Explorer: AWS-native tool to analyze EKS costs using Kubernetes tags/labels.

- CloudZero: SaaS platform aligning Kubernetes costs with business metrics.

Why It Matters:

- Identify which teams, namespaces, or services drive the highest costs.

- Detect unused resources (e.g., zombie deployments).

- Integrate cloud billing (AWS, GCP, etc.) with Kubernetes cost tracking for full transparency.
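To show what attributing cost to a namespace means in practice, here is a deliberately simplified Python sketch that splits one node's cost in proportion to CPU requests. It is not the allocation model of any tool listed above, which also account for memory, storage, and other resources; the namespaces and figures are invented.

```python
from collections import defaultdict

def allocate_node_cost(node_cost: float, node_cpu: float,
                       pods: list[tuple[str, float]]) -> dict[str, float]:
    """Split a node's cost across namespaces by CPU request.

    pods is a list of (namespace, cpu_request) pairs scheduled on the node.
    Capacity that nobody requested is reported under "__idle__".
    """
    shares: dict[str, float] = defaultdict(float)
    requested = 0.0
    for namespace, cpu_request in pods:
        shares[namespace] += node_cost * cpu_request / node_cpu
        requested += cpu_request
    shares["__idle__"] = node_cost * max(0.0, node_cpu - requested) / node_cpu
    return dict(shares)

costs = allocate_node_cost(
    node_cost=100.0,
    node_cpu=8,
    pods=[("checkout", 2), ("checkout", 1), ("search", 3)],
)
print(costs)
```

Reporting idle capacity as its own line item is useful because it is often the largest single bucket and belongs to no team unless someone owns it explicitly.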

FinOps Integration:

Cost monitoring is central to FinOps, a framework that makes cloud spend a shared responsibility across engineering, finance, and product teams. By providing developers with cost data and clear targets, companies can drive smarter, cost-conscious decisions.

Best Practices:

- Set budgets & alerts for Kubernetes spend.

- Conduct weekly cost reviews to catch inefficiencies early.

- Bake cost considerations into deployment decisions for ongoing optimization.
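A budget alert can be as simple as projecting month-to-date spend forward. The Python sketch below uses a straight-line projection, which is an assumption that ignores weekly seasonality; the thresholds and amounts are illustrative.

```python
def projected_month_end_spend(spend_to_date: float, day_of_month: int,
                              days_in_month: int) -> float:
    """Straight-line projection of spend to the end of the month."""
    return spend_to_date / day_of_month * days_in_month

def budget_status(spend_to_date: float, day_of_month: int, days_in_month: int,
                  budget: float, warn_ratio: float = 0.9) -> str:
    projected = projected_month_end_spend(spend_to_date, day_of_month, days_in_month)
    if projected > budget:
        return "over"
    if projected > warn_ratio * budget:
        return "warning"
    return "ok"

# Hypothetical figures: day 10 of a 30-day month with a 15,000 budget.
for spend in (4000, 4700, 6000):
    print(spend, budget_status(spend, 10, 30, budget=15000))
```

Alerting on the projection rather than on actual spend gives teams time to react before the budget is already gone.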

4. Optimize Storage and Networking

Storage and networking costs in Kubernetes can spiral out of control if left unchecked. Optimizing these areas ensures you're not paying for unused resources or excessive data transfer.

Storage Optimization

- Identify and Delete Unused Persistent Volumes: Over time, leftover persistent volumes (PVs) from decommissioned applications or old deployments can accumulate, leading to unnecessary costs. Regular audits can help reclaim storage and reduce spending.

- Use Object Storage Instead of Block Storage: Object storage solutions like Amazon S3, Google Cloud Storage, and Azure Blob Storage offer a more cost-effective alternative to block storage (e.g., EBS, Persistent Disk) for storing logs, backups, and static assets. Object storage is cheaper, scales easily, and eliminates the need for expensive, high-performance block storage unless truly necessary.

- Right-Size Storage Classes: Many Kubernetes deployments use high-performance SSD-backed storage by default, even for workloads that don't require it. Choosing cost-effective storage classes (e.g., standard HDD-based storage for infrequent access) can significantly lower costs.
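A persistent volume audit can start from the output of `kubectl get pv -o json`. The Python sketch below lists volumes that are not bound to any claim; the sample data is invented, and in practice you would review each candidate (and its reclaim policy) before deleting anything.

```python
import json

def unbound_volumes(pv_list: dict) -> list[tuple[str, str, str]]:
    """Return (name, phase, capacity) for PersistentVolumes not bound to a claim."""
    results = []
    for item in pv_list.get("items", []):
        phase = item.get("status", {}).get("phase", "Unknown")
        if phase in ("Available", "Released", "Failed"):
            name = item["metadata"]["name"]
            capacity = item.get("spec", {}).get("capacity", {}).get("storage", "?")
            results.append((name, phase, capacity))
    return results

# Invented sample in the shape of `kubectl get pv -o json` output.
sample = json.loads("""
{"items": [
  {"metadata": {"name": "pv-db"},   "spec": {"capacity": {"storage": "100Gi"}}, "status": {"phase": "Bound"}},
  {"metadata": {"name": "pv-old"},  "spec": {"capacity": {"storage": "500Gi"}}, "status": {"phase": "Released"}},
  {"metadata": {"name": "pv-test"}, "spec": {"capacity": {"storage": "20Gi"}},  "status": {"phase": "Available"}}
]}
""")

candidates = unbound_volumes(sample)
for name, phase, capacity in candidates:
    print(f"{name}: {phase}, {capacity}")
```

Released volumes are the usual culprits: their claim was deleted, but a Retain reclaim policy keeps the underlying disk, and its bill, alive.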

Networking Optimization

- Minimize Data Transfer and Egress Costs: Cloud providers charge for inter-region and internet-bound data transfers. Optimizing network policies, using compression, and keeping traffic within a single region can drastically cut egress costs.

- Leverage Internal Load Balancers: For services that don't need external exposure, using internal load balancers instead of public-facing ones helps avoid unnecessary data transfer charges.

- Optimize Service Mesh and API Gateway Usage: If using a service mesh (e.g., Istio, Linkerd) or API gateways, ensure they're configured efficiently to avoid excessive traffic routing, which can increase costs.
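A back-of-the-envelope estimate helps decide whether compression or region consolidation is worth the effort. This Python sketch assumes a flat per-GB egress price and treats in-region traffic as free, both of which are simplifications; the price and volumes are placeholders.

```python
def monthly_egress_cost(gb_per_month: float, price_per_gb: float,
                        compression_ratio: float = 1.0,
                        in_region_fraction: float = 0.0) -> float:
    """Estimate billable egress cost.

    compression_ratio is original size divided by compressed size (1.0 = none).
    in_region_fraction is the share of traffic kept inside the region,
    assumed free here for simplicity.
    """
    if compression_ratio < 1.0 or not 0.0 <= in_region_fraction <= 1.0:
        raise ValueError("invalid compression ratio or in-region fraction")
    billable_gb = gb_per_month * (1.0 - in_region_fraction) / compression_ratio
    return billable_gb * price_per_gb

PRICE_PER_GB = 0.09  # hypothetical egress price

baseline_cost = monthly_egress_cost(10_000, PRICE_PER_GB)
optimized_cost = monthly_egress_cost(10_000, PRICE_PER_GB,
                                     compression_ratio=3.0, in_region_fraction=0.5)
print(f"baseline=${baseline_cost:.2f} optimized=${optimized_cost:.2f}")
```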

5. Automate Scaling with AI & Machine Learning

Traditional auto-scalers react to spikes in demand but often lack the intelligence to predict future workload requirements. AI-driven scaling solutions take automation to the next level by learning usage patterns and optimizing resource allocation dynamically.
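For reference, the reactive behavior described here follows a simple rule in the standard Horizontal Pod Autoscaler: scale the replica count by the ratio of the current metric to its target, and skip the change when the ratio is within a tolerance (10% by default). The Python sketch below reproduces that rule in simplified form, leaving out details such as stabilization windows and pod readiness.

```python
import math

def hpa_desired_replicas(current_replicas: int, current_metric: float,
                         target_metric: float, tolerance: float = 0.1,
                         min_replicas: int = 1, max_replicas: int = 10) -> int:
    """desired = ceil(current_replicas * current_metric / target_metric)."""
    ratio = current_metric / target_metric
    if abs(ratio - 1.0) <= tolerance:
        return current_replicas  # close enough to target, do nothing
    desired = math.ceil(current_replicas * ratio)
    return max(min_replicas, min(max_replicas, desired))

# Average CPU utilization of 90% against a 60% target, with 4 replicas running.
print(hpa_desired_replicas(4, current_metric=90, target_metric=60))
```

The rule only sees the load that has already arrived, which is why new replicas tend to come online after a spike has begun.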

Predictive Auto-Scaling

- AI-Based Auto-Scalers: Machine learning models analyze historical data to predict traffic spikes before they happen, proactively adjusting resources to prevent over-provisioning or downtime. Tools like CAST AI and StormForge apply this kind of intelligence to CPU, memory, and node allocation, while Karpenter (an open-source node autoscaler that originated at AWS) provisions right-sized nodes quickly in response to pending pods.

- Dynamic Horizontal and Vertical Scaling: The Horizontal Pod Autoscaler (HPA) and Vertical Pod Autoscaler (VPA) adjust pod counts and resource requests based on observed demand; pairing them with predictive, AI-driven signals helps prevent unnecessary over-provisioning.
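Predictive scaling does not have to start with a complex model. The Python sketch below uses a seasonal-naive forecast (the average of the same hour on previous days) as a stand-in for the ML models mentioned above. It is not how any named product works, and the traffic numbers, per-replica throughput, and headroom are assumptions.

```python
import math

def forecast_next_hour(hourly_rps: list[float], season: int = 24) -> float:
    """Seasonal-naive forecast: mean of the same hour in earlier seasons."""
    next_index = len(hourly_rps)
    same_hour = [hourly_rps[i] for i in range(next_index - season, -1, -season)]
    if not same_hour:
        raise ValueError("need at least one full season of history")
    return sum(same_hour) / len(same_hour)

def replicas_for(rps: float, rps_per_replica: float,
                 headroom: float = 0.2, min_replicas: int = 2) -> int:
    """Replicas needed to serve the forecast load with some headroom."""
    return max(min_replicas, math.ceil(rps * (1 + headroom) / rps_per_replica))

# Two invented days with a spike at 09:00, plus the first nine hours of day three.
day = [100.0] * 24
day[9] = 900.0
history = day + day + day[:9]

expected_rps = forecast_next_hour(history)
print(expected_rps, replicas_for(expected_rps, rps_per_replica=100))
```

Scaling to the forecast before 09:00 means capacity is ready when the spike arrives, instead of being added once utilization thresholds are already breached.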

Serverless Kubernetes for Cost Efficiency

- AWS Fargate on EKS, GKE Autopilot, and Azure Container Apps offer serverless container platforms that eliminate manual node provisioning. You are billed for the resources your workloads request while they run rather than for idle nodes, which can significantly reduce costs.

- Spot Instances for Non-Critical Workloads: AI-driven auto-scalers can dynamically shift workloads to spot instances, taking advantage of low-cost, short-lived compute capacity.

Proactive Monitoring & Scaling Adjustments

- Traffic Pattern-Based Scaling: Instead of scaling reactively based on CPU/memory thresholds, AI-driven monitoring anticipates demand surges and scales up before peak load hits.

- Load-Aware Scheduling: AI-enhanced Kubernetes schedulers distribute workloads more efficiently across clusters, reducing fragmentation and ensuring optimal resource utilization.
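Reducing fragmentation is essentially a bin-packing problem. The Python sketch below uses the classic first-fit decreasing heuristic on CPU requests alone to show how placement affects node count. It is an illustration of the idea, not the Kubernetes scheduler's algorithm or that of any AI-based scheduler, and the pod sizes are invented.

```python
def pack_pods(pod_cpu_requests: list[int], node_capacity: int) -> list[list[int]]:
    """First-fit decreasing bin packing of pod CPU requests (millicores) onto nodes."""
    nodes: list[list[int]] = []
    for request in sorted(pod_cpu_requests, reverse=True):
        if request > node_capacity:
            raise ValueError(f"pod requesting {request}m cannot fit on any node")
        for node in nodes:
            if sum(node) + request <= node_capacity:
                node.append(request)  # fits on an existing node
                break
        else:
            nodes.append([request])   # no node had room, open a new one
    return nodes

pods = [2500, 1500, 3000, 1000, 2000, 500, 1500]
placement = pack_pods(pods, node_capacity=4000)
print(len(placement), "nodes:", placement)
```

Placing the largest pods first leaves small gaps that the small pods can fill, which is why packing order matters for how many nodes you end up paying for.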

6. Eliminate Idle & Unused Resources

Even with rightsizing and autoscaling, idle resources still lurk in Kubernetes, silently inflating costs - like leaving your car running overnight. Eliminating them leads to quick savings in 2025's tight budget climate.

What to Look For:

- Idle Nodes & Overprovisioned Node Pools: Scale down underutilized node pools or consolidate workloads.

- Unattached Volumes & IPs: Orphaned persistent volumes (PVs) and load balancers from deleted services still incur charges - regularly audit and clean them up.

- Old Deployments & Test Environments: Tear down unused staging environments; Kubernetes makes recreation easy.

- Excess Capacity in Non-Prod: Implement "sleep mode" for dev/test clusters (e.g., scale node pools to zero overnight and on weekends), which can cut their compute costs by 50% or more.

- Idle CPU in Workloads: Use tools like Kubecost to detect over-allocated or underutilized resources, then adjust requests or scale down.

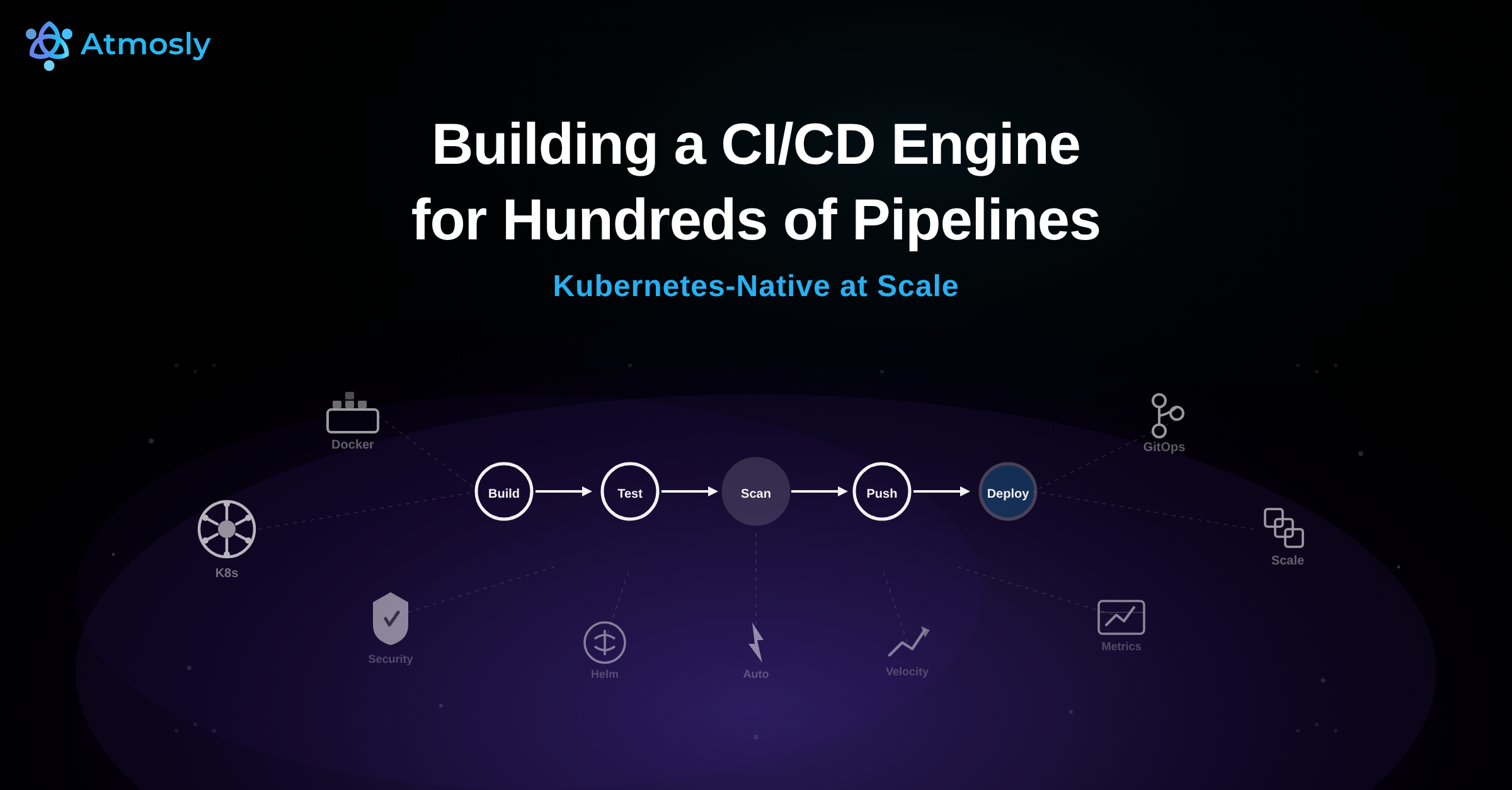
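The savings from a "sleep mode" for non-production clusters follow directly from the schedule. This Python sketch computes the fraction of always-on compute hours avoided; it ignores costs that persist while a cluster sleeps, such as control plane fees and storage, and the schedule shown is only an example.

```python
HOURS_PER_WEEK = 168

def sleep_mode_savings(awake_hours_per_day: int, awake_days_per_week: int) -> float:
    """Fraction of always-on compute hours avoided by scaling to zero when idle."""
    if not (0 <= awake_hours_per_day <= 24 and 0 <= awake_days_per_week <= 7):
        raise ValueError("schedule out of range")
    awake_hours = awake_hours_per_day * awake_days_per_week
    return 1 - awake_hours / HOURS_PER_WEEK

# Dev cluster awake 12 hours a day, Monday to Friday.
savings = sleep_mode_savings(12, 5)
print(f"{savings:.0%} of compute hours avoided")
```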

7. Optimize CI/CD Pipelines for Cost Efficiency

- Reduce build and deployment frequency where possible to minimize compute costs.

- Use ephemeral environments that spin up only when needed and shut down automatically.

- Provision testing environments on demand instead of reserving capacity for them in advance.
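Ephemeral environments only save money if something actually shuts them down. A minimal Python sketch of a time-to-live check is shown below; the environment names, the 8-hour TTL, and the idea of tracking a last-activity timestamp are all assumptions for illustration.

```python
from datetime import datetime, timedelta, timezone

def expired_environments(environments: list[tuple[str, datetime]], now: datetime,
                         ttl: timedelta = timedelta(hours=8)) -> list[str]:
    """Return names of environments whose last activity is older than the TTL."""
    return [name for name, last_activity in environments if now - last_activity > ttl]

now = datetime(2025, 3, 10, 18, 0, tzinfo=timezone.utc)
environments = [
    ("pr-101-preview", datetime(2025, 3, 10, 16, 30, tzinfo=timezone.utc)),
    ("pr-087-preview", datetime(2025, 3, 9, 11, 0, tzinfo=timezone.utc)),
    ("load-test-tmp", datetime(2025, 3, 10, 9, 0, tzinfo=timezone.utc)),
]

to_delete = expired_environments(environments, now)
print(to_delete)
```

Running a check like this on a schedule turns cleanup from a manual chore into a default, so forgotten preview environments stop accumulating cost.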

Conclusion

Kubernetes cost optimization is no longer optional - it's essential for financial efficiency, scalability, and sustainability. As cloud costs rise, businesses must adopt rightsizing, automated scaling, cost monitoring, and waste reduction strategies to maintain control over expenses.

By implementing these best practices, organizations can maximize Kubernetes efficiency without overspending. Now is the time to take proactive steps to ensure a cost-effective and sustainable Kubernetes environment.

Is your organization ready to optimize Kubernetes costs? Start today with Atmosly.